1. Create a new PySpark project

This is a step-by-step introduction intended for beginners.

If you are already familiar with the basics of Conveyor, our how-to guides are probably more appropriate.

In this tutorial, you will create and deploy a new batch processing project using PySpark, the Python API for Apache Spark and a widely used framework for distributed data processing. The project will be scheduled to run daily and integrated with cloud services such as AWS Glue and S3.

The principles covered in this tutorial apply to other development stacks as well.

1.1. Set up your project

If you have not already done so, you will need to set up the local development environment.

For convenience, let us define some environment variables for the rest of the tutorial.

Use your first name as the value. The name should not contain any special characters such as underscores or dashes; for example, john would be a good name.

Open your terminal and execute the following commands:

```bash
export NAME=INSERT_YOUR_NAME
export PROJECT_NAME=$NAME
export ENVIRONMENT_NAME=$NAME
```
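Because the name must not contain special characters, you may want to check it before moving on. The snippet below is a minimal sketch assuming a bash shell. valid_name is a hypothetical helper, not part of the Conveyor CLI, and accepting only lowercase letters and digits is our assumption based on the john example, not a documented Conveyor rule.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeeds (status 0) if the argument is non-empty and
# contains only lowercase letters and digits. This character set is an
# assumption based on the "john" example, not a documented Conveyor rule.
valid_name() {
  [[ "$1" =~ ^[a-z0-9]+$ ]]
}

# Check the NAME variable exported above; only prints, so it is safe to run
# directly in an interactive terminal.
if valid_name "${NAME:-}"; then
  echo "NAME=$NAME looks fine"
else
  echo "NAME='${NAME:-}' contains characters other than lowercase letters and digits" >&2
fi
```

If the check complains, export NAME again with a simpler value before creating the project.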

1.1.1. Create the project

Any batch job in any language can run on Conveyor, as long as nothing prevents it from being packaged as a Docker image. For convenience, we provide ready-to-go templates for batch jobs using vanilla Python, PySpark, and other languages and frameworks. Using these templates is optional.

For this tutorial, we will use the PySpark template:

```bash
conveyor project create --name $PROJECT_NAME --template pyspark
```

For this tutorial, select the following options for your project (other options should be left on their default settings):

- conveyor_managed_role: No
- project_type: batch (unless you want to try out PySpark streaming; in that case enter batch-and-streaming, see streaming)
- cloud: aws (if you are using Azure, update it accordingly)

It takes a few moments to create the project. The result is a local folder with the same name as the project. Let's have a look inside.

1.2. Explore the code

Have a look at the folder that was just created and identify the following subfolders.

```bash
cd $PROJECT_NAME
ls -al | grep '^d'
```

This should show you the following directories:

- .conveyor contains Conveyor-specific configuration.
- dags contains the Airflow DAGs that will be deployed as part of this project. Here you define when and how your project will run.
- src contains the code that will be executed as part of your project.
- tests contains the unit tests for the source code.

The project root also contains a Dockerfile. Because it is a file rather than a directory, it does not appear in the output of the command above; run ls -al to see it. The Dockerfile defines how to package your project code as well as the versions of every dependency (Spark, Python, AWS, ...). We supply our own Spark images that can run on both AWS and Azure; more details about the images can be found here.
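If you prefer to check the layout programmatically, the following bash sketch tests for the directories and the Dockerfile described above. check_layout is a hypothetical helper, not a Conveyor command, and it only checks that these names exist, not what they contain.

```bash
#!/usr/bin/env bash
# Hypothetical helper: returns 0 if the given directory (default: the current
# directory) contains the folders and Dockerfile described in this tutorial,
# and 1 if any of them is missing.
check_layout() {
  local root="${1:-.}"
  local status=0
  local dir

  # The template folders listed above.
  for dir in .conveyor dags src tests; do
    if [[ -d "$root/$dir" ]]; then
      echo "found directory: $dir"
    else
      echo "missing directory: $dir" >&2
      status=1
    fi
  done

  # The Dockerfile is a regular file at the project root.
  if [[ -f "$root/Dockerfile" ]]; then
    echo "found file: Dockerfile"
  else
    echo "missing file: Dockerfile" >&2
    status=1
  fi

  return "$status"
}
```

Run check_layout from inside the project folder, or check_layout "$PROJECT_NAME" from its parent; it prints what it found and returns a non-zero status if anything is missing.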

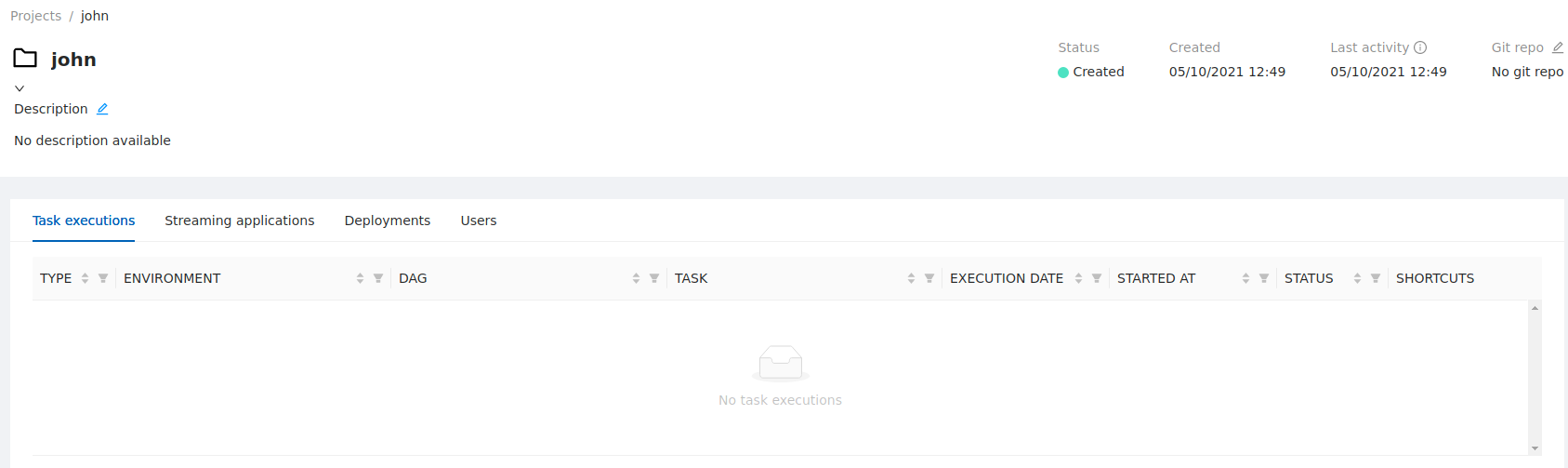

1.3. Explore the UI

In the Conveyor UI, select Projects in the menu on the left and find your project in the list. Clicking on it shows its details. Use this opportunity to update the project description.